
12/23/2023 AWS Chatbot documentation

There is one Trusted Advisor category of data: ServiceLimits. Our Amazon integrations collect Amazon service limits data, which appears in New Relic with the provider value ServiceLimits. You can query and explore your data using the TrustedAdvisorSample event type.

To view and use your integration data, go to All capabilities > Infrastructure > AWS and select the AWS Trusted Advisor integration link.

Trusted Advisor checks are automatically refreshed by AWS weekly, and only for customers with AWS Business or Enterprise Support plans. To keep the data fresh, New Relic programmatically sends refresh requests to AWS. You can change the polling frequency and filter data using configuration options.

Default polling information for the AWS Trusted Advisor integration:

This integration requires additional access permissions not covered by default managed policies. You can find out more on the Integrations and managed policies page.

In this project, I focused on three primary data cleaning techniques:

Text Normalization: To ensure consistency, I normalized the text by converting it to lowercase and removing extra spaces and special characters. This step facilitated better analysis and processing by the model.

Token Limitation: Since the model I used has a token limit of 5,000, I divided sections exceeding this limit into smaller chunks. This enabled the model to process the information effectively without losing any essential data.

Filtering Out Short Texts: I also filtered out any text with fewer than 13 tokens, as these often comprised service names, like "Amazon S3," without any contextual information. By removing these short, context-less snippets, I ensured that the data fed into the model was both relevant and meaningful.

These data cleaning steps helped to refine the raw data and enhance the model's overall performance, ultimately leading to more accurate and useful insights.

Next, I created text embeddings for each of the pages. Text embeddings measure the relatedness of text strings and are used for:

- Search (where results are ranked by relevance to a query string)
- Clustering (where text strings are grouped by similarity)
- Recommendations (where items with related text strings are recommended)
- Anomaly detection (where outliers with little relatedness are identified)
- Diversity measurement (where similarity distributions are analyzed)
- Classification (where text strings are classified by their most similar label)

An embedding is a vector (list) of floating-point numbers. The distance between two vectors measures their relatedness. Small distances suggest high relatedness and large distances suggest low relatedness. This OpenAI Notebook provides a full end-to-end example of creating text embeddings. As an embedding, each text becomes a list of 1536 numbers that represent it.

Prompt engineering is about designing prompts that elicit the most relevant and desired response from a Large Language Model (LLM) such as GPT-3. Crafting these prompts is an art that many are still figuring out, but a rule of thumb is that the more detailed the prompt, the better the outcome.

OpenAI recently released their ChatGPT API, which is 10x cheaper than their previous text generation API. To use the API, you create a prompt that leverages a "system" persona, and then take input from the user. Here is what I used for the system prompt:

"You are an AWS Certified Solutions Architect. Your role is to help customers understand best practices on building on AWS. Return your response in markdown, so you can bold and highlight important steps for customers."

Then I added one more system prompt to use the context from the text embeddings search:

"Use the following context from the AWS Well-Architected Framework to answer the user's query.\nContext:\n"

Afterwards, the user prompt is the query, such as "How can I design resilient workloads?".
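The three data cleaning steps described above (normalization, splitting at the 5,000-token limit, and dropping texts under 13 tokens) could look roughly like the sketch below. It is not the original project code: the function names are hypothetical, and tokens are approximated by whitespace-separated words, whereas the original most likely used a model-specific tokenizer.

```python
import re
from typing import List

MAX_TOKENS = 5000  # token limit cited in the text
MIN_TOKENS = 13    # texts with fewer tokens than this are dropped


def count_tokens(text: str) -> int:
    """Stand-in tokenizer (assumption): counts whitespace-separated words."""
    return len(text.split())


def normalize(text: str) -> str:
    """Lowercase, replace special characters, and collapse extra whitespace."""
    text = text.lower()
    # Keep letters, digits, whitespace, and basic punctuation only.
    text = re.sub(r"[^a-z0-9\s.,:;!?'\"()/-]", " ", text)
    return re.sub(r"\s+", " ", text).strip()


def chunk(text: str, max_tokens: int = MAX_TOKENS) -> List[str]:
    """Split text into consecutive pieces of at most max_tokens tokens."""
    words = text.split()
    return [
        " ".join(words[i:i + max_tokens])
        for i in range(0, len(words), max_tokens)
    ]


def clean(sections: List[str]) -> List[str]:
    """Normalize each section, chunk long ones, and drop short snippets."""
    cleaned = []
    for section in sections:
        for piece in chunk(normalize(section)):
            if count_tokens(piece) >= MIN_TOKENS:
                cleaned.append(piece)
    return cleaned
```

Calling `clean(["Amazon S3", some_long_section])` would drop the bare service name and return the long section in normalized form, split into several chunks if it exceeds the limit.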

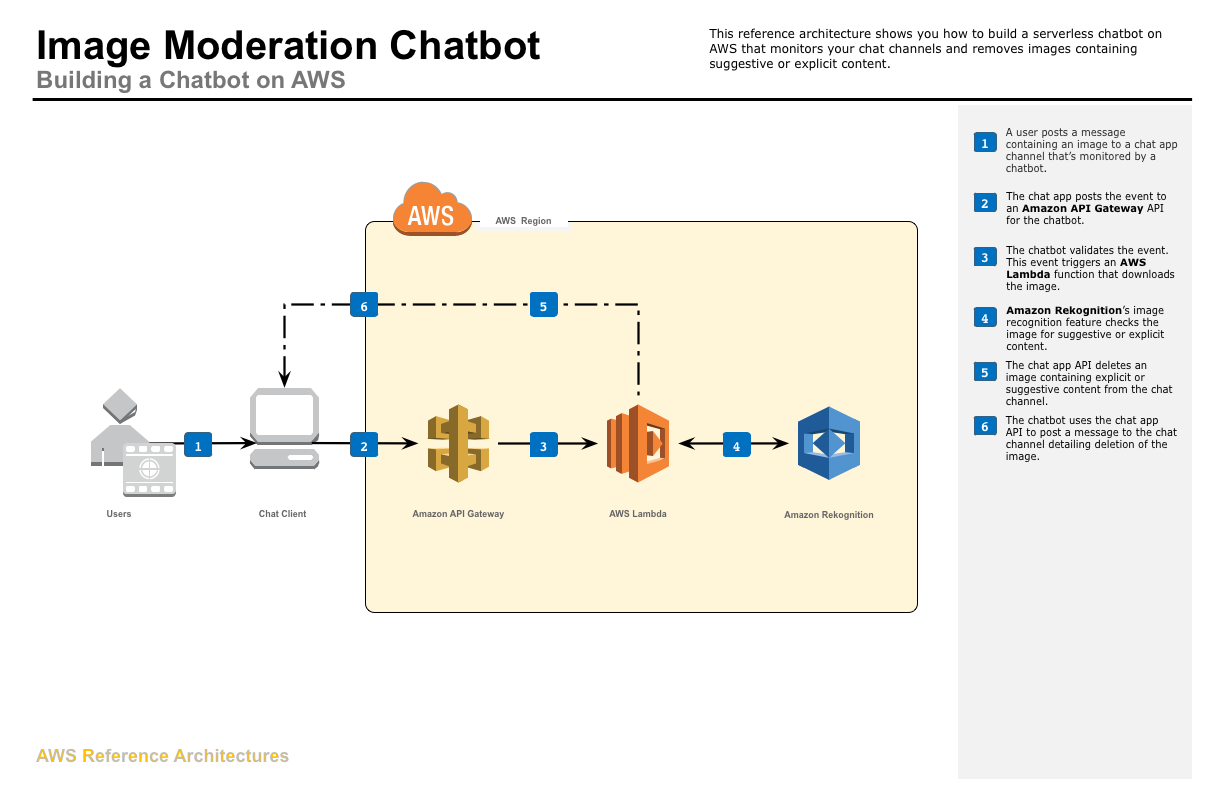
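To make the embeddings search and the prompt layout described earlier more concrete, here is a minimal sketch that ranks stored texts by relatedness to a query embedding and then assembles the two system prompts and the user prompt as a chat message list. The text only says that distance measures relatedness; cosine similarity is an assumption here, as are the helper names and the way the retrieved context is appended. The embeddings are passed in as plain lists, so no API call is made.

```python
import math
from typing import Dict, List, Sequence, Tuple

SYSTEM_PROMPT = (
    "You are an AWS Certified Solutions Architect. "
    "Your role is to help customers understand best practices on building on AWS. "
    "Return your response in markdown, so you can bold and highlight "
    "important steps for customers."
)
CONTEXT_PROMPT = (
    "Use the following context from the AWS Well-Architected Framework "
    "to answer the user's query.\nContext:\n"
)


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two non-zero vectors (higher means more related)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_matches(
    query_vec: Sequence[float],
    docs: List[Tuple[str, Sequence[float]]],
    k: int = 2,
) -> List[str]:
    """Return the k texts whose embeddings are most similar to the query."""
    ranked = sorted(
        docs,
        key=lambda doc: cosine_similarity(query_vec, doc[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]


def build_messages(query: str, context_texts: List[str]) -> List[Dict[str, str]]:
    """Assemble persona prompt, context prompt, and user query in order."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        # Joining the retrieved texts with newlines is an assumption.
        {"role": "system", "content": CONTEXT_PROMPT + "\n".join(context_texts)},
        {"role": "user", "content": query},
    ]
```

In practice the vectors would be the 1536-number embeddings mentioned above, and the resulting list would be passed as the `messages` argument of a chat completion request.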

Data cleaning is a vital step in preparing scraped data for analysis, ensuring that the resulting insights are accurate, relevant, and meaningful.

Before you configure integration with AWS Chatbot, you must configure a notification rule and a rule target. For more information, see Setting up and Create a notification rule. You must also configure a Slack channel, Microsoft Teams channel, or an Amazon Chime chatroom in AWS Chatbot. For more information, see the AWS Chatbot documentation.
